Retail Site Expansion - Between Spreadsheets and Black Boxes

Opening a new store is one of the most consequential decisions a retailer can make.

A good site can support years of growth, improve convenience, and strengthen network performance. A bad one can lock the business into underperformance, generate cannibalization, and waste capital. Yet many store location decisions are still made with tools and processes that are either too manual or too opaque.

That is the problem.

Retail site selection should not depend on Excel-heavy, ad-hoc analysis. Nor should it depend on black-box tools that produce a polished recommendation without letting the business understand the logic behind it. The right approach sits in the middle: standardized enough to be fast and repeatable, but transparent enough to be understood, challenged, and improved.

That is the logic behind white-box location intelligence.

Why the right tools matter

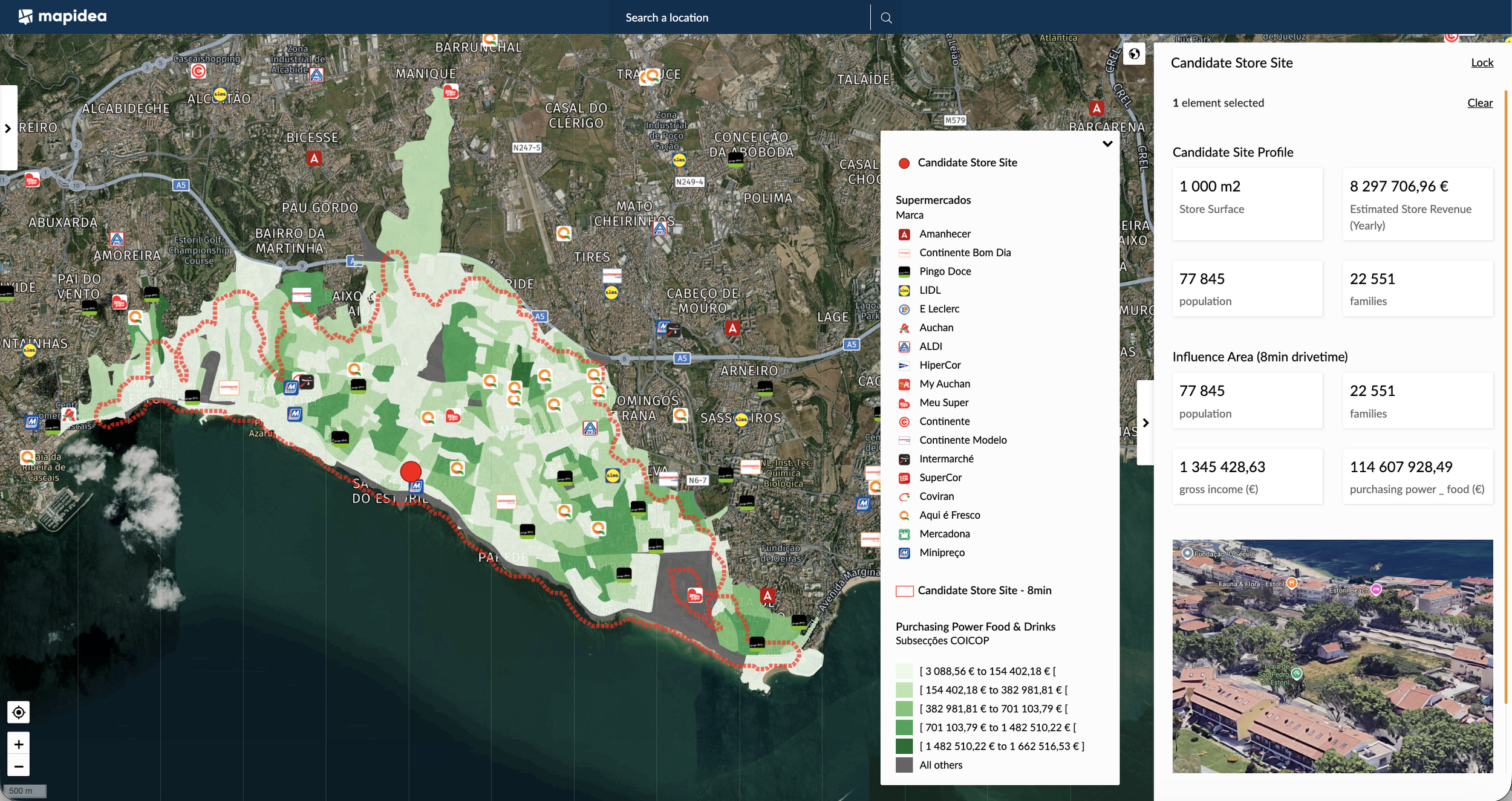

Store expansion is not just about spotting a promising point on a map. It is about evaluating a candidate location in the context of a real commercial network: existing stores, competitors, purchasing power, accessibility, cannibalization, footfall, and the strategic priorities of the brand.

That requires tools that support both rigor and speed.

Without the right tools, site selection becomes inconsistent and hard to scale. Different analysts use different assumptions. Different locations get evaluated in different ways. Reports may look similar, while the logic behind them is anything but.

With the right tools, the process changes. New sites can be assessed faster. Assumptions become explicit. Data becomes reusable. Stakeholders collaborate more easily. The business starts to build a repeatable decision capability instead of treating a location decision as a one-off exercise.

The two extremes that often fail retailers

In practice, many retailers fall into one of two unhelpful extremes.

1. Manual, ad-hoc analysis

This is still common. Analysts gather data from multiple sources, work extensively in Excel, create a few maps, assemble screenshots, and prepare a report based largely on individual effort and know-how.

That can work for a small number of cases, especially when the team knows the market well. But it does not scale.

It is slow, difficult to standardize, and expensive in analyst time. Spreadsheets also make it hard to work with data geographically. Tools such as QGIS or Esri ArcGIS are extremely powerful, but for many business users they are too complex for day-to-day commercial decision-making. As a result, many teams end up stuck between oversimplified spreadsheets and specialist GIS environments they cannot easily operationalize.

This makes iteration costly. A new competitor opens, a site shifts slightly, decision-makers ask for another scenario, and much of the analysis has to be rebuilt.

2. Black-box tools dressed as certainty

At the other extreme are tools and services that promise speed by generating an answer quickly, often wrapped in a visually attractive report and, increasingly, in the language of “using AI”.

That is a strong marketing pitch in the current environment. But beneath the attractive label, the logic is often opaque. The business is asked to trust a score, a ranking, or a recommendation without being able to critically assess the assumptions behind it.

That may look modern. It may even look reassuring. But it demands leaps of faith that can be harmful.

If the retailer cannot understand how a result was produced, how can it judge whether the model reflects its own format, customer behavior, competitive context, or local operating realities? How can it challenge weak assumptions? How can it improve the model over time?

Some solution providers implicitly ask clients to outsource not just the analysis, but the thinking. That is rarely a good idea for decisions this important.

The better approach: standardized, data-rich, transparent

A better model for retail expansion combines the best of both worlds.

It uses standardization to reduce effort and improve consistency. It uses a sound data environment to ground decisions in observable reality. It uses transparent estimation models so the business can understand what is happening. And it supports collaboration between analysts, real estate teams, store managers, and decision-makers.

In this approach, the goal is not to automate judgment away. The goal is to give judgment a better foundation.

It also means that advanced methods, including AI or machine learning, can absolutely have a role. But they should be used in a controlled and transparent way, as a complement to a solid base process, not as a substitute for business understanding.

Just as importantly, the process should not end when a store is approved. Once a location opens, real performance should feed back into the model. Expected catchment, cannibalization, and sales patterns should be compared against reality. That is how location intelligence becomes a continuous optimization capability, not just a site-selection exercise.

A simple white-box recipe for estimating a new store’s potential

Below is a simple but solid example of a transparent estimation model. It is not the only possible model, but it shows the logic of a white-box approach clearly.

Step 1 - Map the existing commercial landscape

Compile all relevant own stores and competitor stores

Include shop surface area for each location

Build a realistic picture of the existing retail supply around the candidate site

Step 2 - Prepare local demand data

Use demographics and purchasing power data for the relevant product categories

Organize this data by a small geographic unit, such as a block group or similar fine-grained statistical area

Treat these as the store’s potential Revenue Contributor Units

Step 3 - Define the store’s influence area

Set an isochrone adapted to the context

For example, a 10-minute drive time in a mid-density urban area where car access dominates

Or an 8-minute walk time in a high-density urban area where pedestrian access is more relevant

The influence area should reflect commercial reality, not an arbitrary default
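One way to keep this choice explicit is to record the influence-area rule as data rather than leaving it in an analyst's settings. The sketch below is only an illustration: the `InfluenceRule` type, the context labels, the unit IDs, and the travel times are all hypothetical, and in practice the travel times would come from whatever routing or isochrone service the team already uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InfluenceRule:
    mode: str           # travel mode: "drive" or "walk"
    max_minutes: float  # size of the isochrone in minutes


# Hypothetical rules mirroring the two examples in the text.
RULES = {
    "mid_density_urban": InfluenceRule(mode="drive", max_minutes=10),
    "high_density_urban": InfluenceRule(mode="walk", max_minutes=8),
}


def units_in_influence_area(travel_minutes, context):
    """Return the sorted IDs of units whose travel time fits the rule.

    travel_minutes maps unit_id -> {mode: minutes to the candidate site}.
    """
    rule = RULES[context]
    return sorted(
        unit_id
        for unit_id, by_mode in travel_minutes.items()
        if by_mode[rule.mode] <= rule.max_minutes
    )


# Made-up travel times for three block groups.
travel = {
    "BG-001": {"drive": 4.0, "walk": 7.5},
    "BG-002": {"drive": 9.0, "walk": 25.0},
    "BG-003": {"drive": 14.0, "walk": 40.0},
}

print(units_in_influence_area(travel, "mid_density_urban"))
print(units_in_influence_area(travel, "high_density_urban"))
```

With these made-up numbers, the same candidate site captures two units under the drive-time rule and only one under the walk-time rule, which is why the rule deserves to be stated and reviewed.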

Step 4 - For each Revenue Contributor Unit, calculate

The distance to the candidate store location

The total nearby shopping surface from existing stores within a chosen threshold, such as 5 to 10 minutes, excluding the candidate site itself

A Revenue Contribution Ponderator, a weight from 0% to 100% that is higher when the unit is closer to the candidate store and when there is less competing shopping surface nearby

The Revenue Contribution from that unit, by multiplying the ponderator by the unit’s purchasing power

Step 5 - Estimate store revenue

Sum the Revenue Contribution of all units inside the influence area

The result is a first-pass revenue estimate for the candidate location
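Steps 4 and 5 can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a prescribed formula: the recipe only says the ponderator should rise with proximity and fall with nearby competing surface, so the linear distance decay and the surface-share term below are choices made for the example, and all names and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Unit:
    unit_id: str
    purchasing_power: float      # annual spend in the relevant categories
    minutes_to_candidate: float  # travel time to the candidate site
    nearby_surface_m2: float     # existing surface nearby, candidate excluded


def ponderator(unit, max_minutes, candidate_surface_m2):
    """Weight in [0, 1]: higher when closer and facing less competing surface."""
    if unit.minutes_to_candidate > max_minutes:
        return 0.0  # outside the influence area
    # Assumption: linear decay from 1 at the store to 0 at the isochrone edge.
    distance_factor = 1.0 - unit.minutes_to_candidate / max_minutes
    # Assumption: the candidate's share of all surface reachable from the unit.
    surface_share = candidate_surface_m2 / (
        candidate_surface_m2 + unit.nearby_surface_m2
    )
    return distance_factor * surface_share


def estimate_revenue(units, max_minutes, candidate_surface_m2):
    """Sum each unit's purchasing power multiplied by its ponderator."""
    return sum(
        u.purchasing_power * ponderator(u, max_minutes, candidate_surface_m2)
        for u in units
    )


# Made-up units for illustration only.
units = [
    Unit("BG-001", 1_000_000, 2.0, 1_000),
    Unit("BG-002", 500_000, 5.0, 3_000),
    Unit("BG-003", 800_000, 12.0, 500),  # beyond a 10-minute isochrone
]

estimate = estimate_revenue(units, max_minutes=10, candidate_surface_m2=1_000)
print(f"{estimate:,.0f}")
```

Because every factor is visible, a stakeholder who disagrees with the linear decay or the surface-share term can replace it and see exactly how the estimate moves.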

Step 6 - Refine the model over time

Add footfall data where available

Add retailer-specific knowledge and business rules

Calibrate influence areas and weighting logic using current store performance

Incorporate premium datasets such as NielsenIQ where relevant
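As one illustration of calibration, the model's estimates for stores that are already open can be compared with their actual revenue. The sketch below assumes the simplest possible adjustment, a single least-squares scale factor applied to all estimates; a real calibration would more likely also tune influence areas and the weighting logic. The function names and figures are hypothetical.

```python
def calibration_factor(estimated, actual):
    """Scale k that minimizes sum((actual - k * estimated) ** 2)."""
    numerator = sum(e * a for e, a in zip(estimated, actual))
    denominator = sum(e * e for e in estimated)
    if denominator == 0:
        raise ValueError("estimates must not all be zero")
    return numerator / denominator


def relative_errors(estimated, actual, k=1.0):
    """Signed relative error of each scaled estimate against actual revenue."""
    return [(k * e - a) / a for e, a in zip(estimated, actual)]


# Made-up revenue figures (in thousands) for three existing stores.
estimated = [400.0, 500.0, 300.0]
actual = [450.0, 540.0, 330.0]

k = calibration_factor(estimated, actual)
before = relative_errors(estimated, actual)
after = relative_errors(estimated, actual, k)

print(f"scale factor: {k:.3f}")
print("errors before:", [f"{err:+.1%}" for err in before])
print("errors after: ", [f"{err:+.1%}" for err in after])
```

Stores whose error stays large after scaling are the interesting ones: they point to something the model does not yet capture, such as footfall or a local business rule.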

This is not an exotic model. That is precisely its strength.

It is based on real data. It reflects objective business factors such as distance, competition, shop surface area, cannibalization, and local demand. Most importantly, it is understandable by humans and customizable to each retailer’s reality.

That makes it robust, discussable, and improvable.

From analysis to decision - and beyond

Once the analysis is complete, a report can be generated automatically and shared with stakeholders. Templates help reduce effort and improve comparability between sites. But the reporting structure should still adapt to the organization’s real needs.

And once again, the process should not stop there.

Actual store performance should feed back into the model so the business can improve future decisions. Over time, the organization builds not just a better process for choosing sites, but a better understanding of how its network behaves geographically.

That is where real advantage emerges.

Final thought

Retailers do not need to choose between slow spreadsheet-heavy analysis and fast opaque answers.

There is a better path: standardized workflows, strong data, transparent logic, business collaboration, and continuous improvement. It is fast enough to support decisions at scale, but open enough to remain aligned with the retailer’s real commercial dynamics. That is what store expansion requires.

To explore this kind of white-box location intelligence approach in your own expansion process, reach out to us.